If you’re reading this you’ve probably come from The Short Version article on Serverless Static WordPress on AWS for $0.01 a day – if you haven’t read that I’d recommend checking it out first before I go into excessive detail on how this works.

A quick recap

Serverless Static WordPress is a Community Terraform Module from TechToSpeech that needs nothing more than a registered domain name with its DNS pointed at AWS.

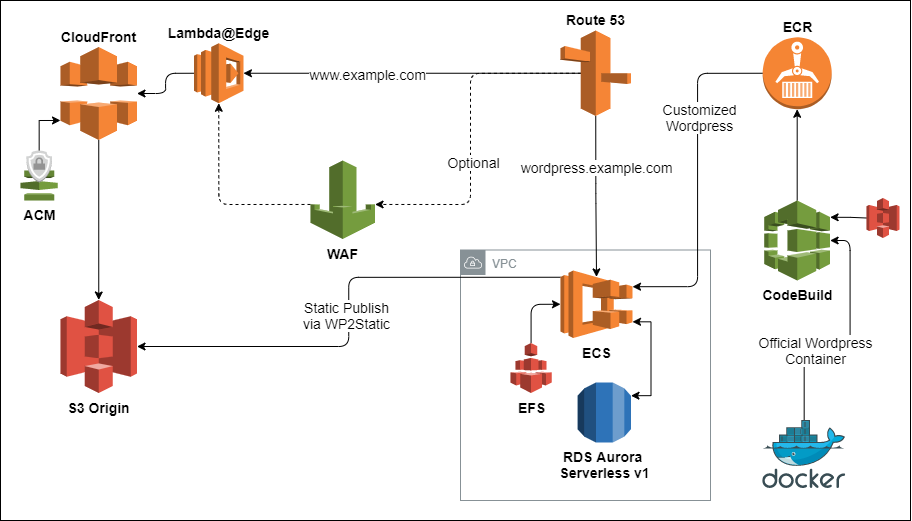

It creates a complete infrastructure framework that allows you to launch a temporary, transient WordPress container. You then log in and customise it like any WordPress site, and finally publish it as a static site fronted by a global CloudFront CDN and S3 Origin. When you’re done you shut down the WordPress container and it costs you almost nothing.

A history of hosting

If you didn’t want to hear the self-indulgent waffling of an aging nerd you’ve come to the wrong place. Skip to the good stuff about this module if that’s not your bag.

I built my first website in 1998, back when the internet was filled with free sites like GeoCities and other competitors whose main distinction was whether they offered you more than 1 megabyte of hosting space. That’s megabyte.

By 2007 I was hosting maybe 30-40 small websites for customers and paying £120 (US$170) a month for a feeble dedicated server with 2GB Memory. Checking my emails from the time it seems I was suspicious at getting such a good deal, as many other quotes I’d obtained were much higher.

Skip to 2015 and I’m rejoicing at having migrated my server to AWS. This linked article is a weird time-capsule ramble on how my hosting had changed over 15 years, and it’s funny to look back to 6 years ago to see how I perceived AWS after only using it for 12 months.

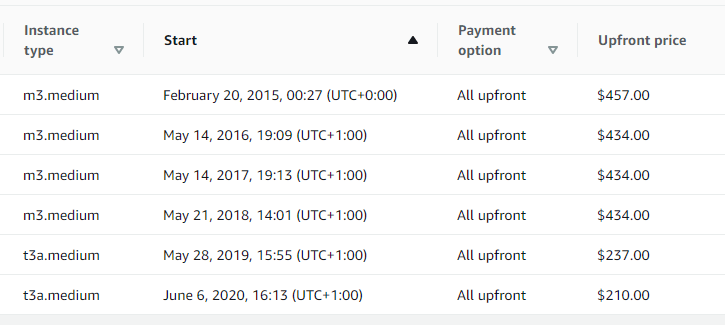

Fast-forward to today and my last Reserved Instance for my hosting server just expired. I won’t be buying one of these again (at least, not for this purpose!).

As you can see, the price of my hosting has only decreased over time. So too has the number of my customers, as I’ve been steadily and purposefully shedding them as I moved away from casual business hosting and focused more on specialised DevOps. With cPanel licensing and some other usage fees I estimated my total spend to be roughly $600 a year to host around 30 websites of varying popularity.

The main reason for this self-indulgent trip down memory lane is mostly to highlight how the explosion of cloud in the last 10 years has just made everything cheaper and I wasn’t even trying that hard.

So I decided to try.

Static Websites and the Serverless craze

Front-end frameworks are all the rage. It’s been a long time since I built a website as a (largely back-end) developer. It took me a while before I realised that jQuery was no longer the hottest new thing. Are we sure it still isn’t?

I’m reliably informed the battle is now being fought by VueJS, React, and Angular, in that order of preference. I know almost nothing about these things. But it means you can have a fast, static front-end that performs dynamic calls to a backend hosted on something that’s so logically abstracted that we now call it ‘Serverless’.

Everything runs on a server somewhere of course, but the difference is now that you don’t have to care about that very much. The compute resource you need to perform actual work is getting so commoditised that as a developer it’s just a question of where the API is that you call. The pesky detail of where this might run is left to DevOps engineers. Since all DevOps are doing is utilising another abstraction provided by people that actually put physical hardware in racks, and power, cool, and monitor it, it’s mind-boggling to realise there’s multiple levels deeper still. Just how does a processor even work anyway?

But back to static websites – they’re a thing. Yet WordPress, as a dynamic PHP-driven service, has remained incredibly popular in some sizeable markets. It claims to dwarf its competitors who offer templated websites leveraging simple content management systems (CMS) for small businesses. Whether you love it or hate it, WordPress is awesomely flexible, with thousands of plugins to choose from offering near-total customisation. I’ve always used it, I still want to – but I also want to get some of this ‘serverless’ and ‘static website’ goodness too. I simultaneously want to save as much money as possible.

Serverless Static WordPress on AWS – The Terraform Module

These were my requirements:

- I don’t want to run or maintain any servers. That includes anything in EC2.

- I need to be able to run WordPress to modify my site.

- I need to be able to publish it statically. It must be easy to do.

- I want this to cost as little as possible all the time I’m not doing anything to it, or whenever it’s not receiving any traffic (95% of the time!).

- I want SSL, and a Global CDN.

This is how I went about satisfying them:

WordPress in a Docker container was obvious. But how? AWS provides numerous ways to run a container. Elastic Container Service (ECS) in Fargate mode fits my requirements the best, and I can even launch it on Fargate Spot, making the container cost mere fractions of a cent. I can scale its CPU and Memory based on my requirements.

So I start there – I push the official WordPress Docker image into ECR and launch it as a Task. I grab the public IP from the console and try to access it. That works to get to the installer screen, but now what? WordPress needs a database, so I manually launch an Aurora Serverless v1 RDS cluster using the MySQL engine and plug in the details, and it connects.

But wait – if I want this to actually be consistent across launches, I’m going to need some persistent storage for the WordPress installation files plus any plugins or content I upload. EFS fits this nicely and is integrated with Fargate. I’m using General Purpose performance mode rather than Max I/O, and default Bursting Throughput – it seems sufficient but I really should performance test this some time. This is only going to serve one concurrent user, after all.

Manually running an ECS Task is painful, so I put it behind a proper ECS Service within a Cluster. That way if the Task dies it’ll automatically scale back up to its desired size. It also makes toggling it on and off really easy. But the Public IP changes every time it’s launched – that’s annoying, and going back to the console every time to look it up is going to get old fast. Frustratingly, there’s no way to assign a static IP to a Fargate task. I moan about it with others on the Containers Roadmap and think of a solution. We could, but definitely don’t want to, put an Elastic Load Balancer in front of the ECS service. We’re trying to save money here and an ALB would be disproportionately expensive!
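To make the on/off toggle concrete, here is a minimal Python sketch of what it could look like outside Terraform. It assumes the Service runs a single Task, so "on" means a desired count of 1 and "off" means 0. The function names and the cluster and service names are hypothetical, and this is not necessarily how the module itself does it.

```python
def desired_count_update(cluster: str, service: str, running: bool) -> dict:
    """Build the arguments for ECS UpdateService: 1 task when on, 0 when off."""
    return {
        "cluster": cluster,
        "service": service,
        "desiredCount": 1 if running else 0,
    }


def set_wordpress_running(cluster: str, service: str, running: bool) -> None:
    """Apply the change with boto3 (imported lazily; needs AWS credentials)."""
    import boto3

    boto3.client("ecs").update_service(
        **desired_count_update(cluster, service, running)
    )
```

Calling `set_wordpress_running("my-cluster", "wordpress", False)` would scale the Service to zero, which is what keeps the idle cost so low.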

I figure I can get the container to query itself for its public IP address, and then update a fixed DNS record in Route53 to point to whatever that is. I need to customize the Docker Entrypoint script of the WordPress container so it can run the necessary CLI commands to do the query and push the update. The Task Role of the container will need the necessary IAM permissions attached to it too. I need to build on top of the original WordPress container, so I do that locally and push it to ECR, but also think I’m really going to need to automate this docker build at some point later.

I figure out how to enable the Task Metadata Endpoint in Fargate, but then cry in frustration that, for some reason, this does NOT expose the Public IP of the Task.

I work out how to query the same data from the Elastic Network Interface attached to the Task via its private IP address, and voila – when the container launches it’ll update the DNS so I can reliably access it without messing around looking up values in the AWS console.
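The lookup-and-update flow described above can be sketched roughly as follows. The real entrypoint script uses CLI commands; this Python version with boto3 is only an illustration of the same idea, and every function name, the TTL, and the example record name are assumptions of mine rather than anything taken from the module.

```python
def public_ip_from_enis(response: dict) -> str:
    """Pull the public IP out of an EC2 DescribeNetworkInterfaces response."""
    for eni in response.get("NetworkInterfaces", []):
        public_ip = eni.get("Association", {}).get("PublicIp")
        if public_ip:
            return public_ip
    raise LookupError("no public IP associated with the task's network interface")


def upsert_a_record(record_name: str, public_ip: str, ttl: int = 60) -> dict:
    """Build a Route 53 change batch that points record_name at public_ip."""
    return {
        "Changes": [
            {
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "A",
                    "TTL": ttl,  # short TTL (assumed) so a relaunch is picked up quickly
                    "ResourceRecords": [{"Value": public_ip}],
                },
            }
        ]
    }


def update_dns(private_ip: str, hosted_zone_id: str, record_name: str) -> str:
    """Find the task's public IP via its ENI, then update the fixed DNS record."""
    import boto3  # imported lazily so the helpers above work without AWS access

    enis = boto3.client("ec2").describe_network_interfaces(
        Filters=[{"Name": "addresses.private-ip-address", "Values": [private_ip]}]
    )
    public_ip = public_ip_from_enis(enis)
    boto3.client("route53").change_resource_record_sets(
        HostedZoneId=hosted_zone_id,
        ChangeBatch=upsert_a_record(record_name, public_ip),
    )
    return public_ip
```

The Task Role would need permission for both calls (`ec2:DescribeNetworkInterfaces` and `route53:ChangeResourceRecordSets`), which matches the IAM requirement mentioned earlier.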

But all of my pulling from Dockerhub in testing has made me hit its strict limits on pulling images. This must be a problem a lot of people have, so I publish a small Terraform module to work around it so I can keep going without such silly impediments.

So I’ve got a scrappy version of WordPress running but it’s completely uncustomised and really slow. I install a plugin to let me modify PHP ini values (reminding myself to automate this too, later) so it can better utilise the tiny fraction of CPU and Memory I’ve assigned to this test container. Then I need to move onto the difficult part here – the Static Publishing to S3.

Leon Stafford’s WP2Static Plugin

I’d searched around for static WordPress plugins, all of which seemed to be either commercial, low quality, or lacked the integration necessary to avoid the ‘publish’ part of the process being prohibitively slow. Then I found static-html-output, which turned out to be one of several Static WordPress plugins maintained by Leon Stafford. This dude is a true digital nomad, who will code just as happily from a coffee shop, a library, or a tent if that’s where he is that week. A great guy with an earnest commitment to the purest form of open-source ideals.

WP2Static looks to be the most promising of his works and it has an S3 Add-on, and so I immediately begin testing it and have some really productive conversations back and forth with Leon on various issues. After a couple of tweaks and many, many hours of PHP fatally crashing inside the container (cause: the container was too under-specced), I’m able to complete a publish workflow into a public S3 website bucket.

Seriously, if you get any value from this Terraform module at all, go and sponsor Leon. Without his work on the plugin this is all just a very complicated and futile exercise in AWS infrastructure.

Very manual and very painful

So I’ve got the PoC of this effort up and running, but that more readily stands for ‘Piece of Crap’ rather than ‘Proof of Concept’ at this point.

I start integrating everything I’ve done into Terraform. ECS Service, Task, IAM Role, ECR repository, Container Definition, check.

I really need to automate this docker build as I want to independently build and own the customised image I’m creating, so I implement a CodeBuild pipeline which pulls my base image from ECR (tagged: base), rebakes it with my modifications, and then pushes it back to ECR (tagged: latest). It takes several iterations and many, many failed builds to get right, but I’ve now got a little bit of CI for the workflow.

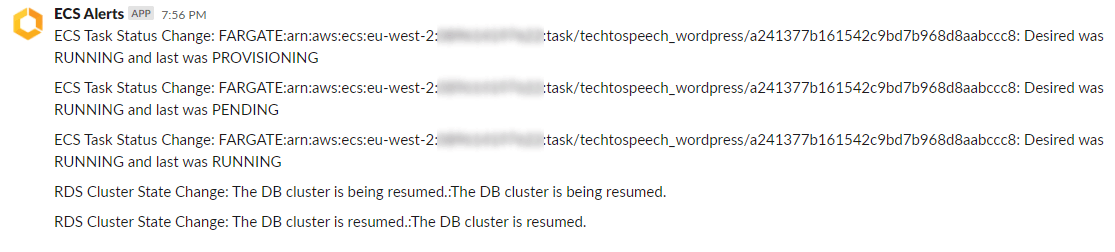

I decided I’d like to know about events happening in Fargate and RDS to visualise the lifecycle flow when I launch the container, and EventBridge is a great service for capturing these transitions. I adapt a Lambda function to consume EventBridge events and ping them out to Slack with some relevant information. There’s a minor typo in the AWS documentation that covers a part of this, so I submit a PR and can now pretend I’m an official AWS docs contributor.
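As a rough illustration of that kind of function, here is a minimal Python sketch that turns an EventBridge event into a Slack message. The `detail-type`, `lastStatus`, `desiredStatus`, and `taskArn` fields are part of the standard ECS Task State Change event, but the message wording, the function names, and the `SLACK_WEBHOOK_URL` environment variable are assumptions of mine, not the module’s actual Lambda.

```python
import json
import os
import urllib.request


def slack_message(event: dict) -> dict:
    """Turn an EventBridge event into a Slack incoming-webhook payload."""
    detail = event.get("detail", {})
    if event.get("detail-type") == "ECS Task State Change":
        task_id = detail.get("taskArn", "unknown").split("/")[-1]
        text = (
            f"ECS task {task_id}: {detail.get('lastStatus')} "
            f"(desired: {detail.get('desiredStatus')})"
        )
    else:
        # Fallback for anything else, such as RDS cluster events.
        text = f"{event.get('source', 'unknown source')}: {event.get('detail-type', 'event')}"
    return {"text": text}


def handler(event, context):
    """Lambda entry point: post the formatted message if a webhook is configured."""
    payload = slack_message(event)
    webhook_url = os.environ.get("SLACK_WEBHOOK_URL")  # hypothetical variable name
    if webhook_url:
        request = urllib.request.Request(
            webhook_url,
            data=json.dumps(payload).encode("utf-8"),
            headers={"Content-Type": "application/json"},
        )
        urllib.request.urlopen(request)
    return payload
```

Keeping the formatting in its own function means it can be checked against sample events without touching Slack at all.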

The bucket I’m publishing to is a static website bucket, and CloudFront can’t use an S3 website endpoint as an origin if I want to make the bucket itself private (and I do). So we use an Origin Access Identity to lock the bucket down to everything except CloudFront. Great! But there’s another problem with this… If it’s not a public static website bucket, it’s not using default index pages in anything other than the Distribution root. That means if I have an index.html in a sub-directory, it won’t be loaded by default. Luckily, there’s an established workaround for this and now I’m integrating Lambda@Edge to CloudFront too.
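The usual shape of that index-page workaround is an origin-request function that rewrites directory-style URIs before they reach S3. Here is a minimal Python sketch of the idea. The function name and the rule for spotting a directory (a last path segment with no dot in it) are my own assumptions, and the function the module actually ships may differ.

```python
def origin_request_handler(event, context):
    """Map directory-style URIs to their index.html before S3 is asked for them."""
    request = event["Records"][0]["cf"]["request"]
    uri = request["uri"]
    if uri.endswith("/"):
        # /blog/ -> /blog/index.html
        request["uri"] = uri + "index.html"
    elif "." not in uri.rsplit("/", 1)[-1]:
        # /blog -> /blog/index.html (no file extension, so treat it as a directory)
        request["uri"] = uri + "/index.html"
    # Returning the request tells CloudFront to carry on to the origin with it.
    return request
```

Requests for real files such as `/style.css` pass through untouched, so only the paths S3 would otherwise fail to resolve are rewritten.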

I need SSL, so I use AWS Certificate Manager (ACM) for a free certificate which is validated by DNS records that get added to my Hosted Zone automatically by Terraform. This is all still part of this single module. Cool.

This is starting to look reasonable, but only for my own purposes. I can’t possibly publish this as something for others to consume, it’s still too amateur.

I go down a new rabbit hole of configuring GitHub Actions for PR Testing, as well as pre-commit hooks for my local development. This prevents me from committing anything that doesn’t automatically update the Terraform module documentation with terraform-docs, pass tfsec tests, tflint, yamllint, jsonlint, and anything else that I could find to stuff in there during one mad weekend. I learn much about GitHub Actions.

I keep thinking of new things to add and tweak. What if users don’t want CloudFront to use all edge locations? Added. What if we want to restore our RDS from an existing snapshot? Added. What about retention policies for all of the default CloudWatch log groups? If we don’t cover that they’ll grow forever. We’re trying to save money here. Added, and I’m wondering if I’ll ever finish.

But finish I must, because I’m not exactly being agile here. The MVP was cooked a long time ago and I really need to release a 0.1.0 instead of trying to create 1.0.0 before I’ve had any feedback. Although at some point I go back and add support for encrypted EFS with automatic transitions to a cheaper, infrequent access storage tier. We’re not going to get costs down to $0.01 a day by skipping out on such optimizations!

And whilst security here is pretty tight all around… I just can’t help but add an optional Web Application Firewall (WAF) module. WAF use also gets covered by my Organization’s CloudFront Security Savings Bundle so it’s really the least I should do. Beware though, WAF has fixed costs which completely break the ‘$0.01 a day’ clickbait.

I’m getting a bit tired so I have to put the module down for a couple of weeks to pass the bar as an AWS Authorized Instructor (AAI). The depth of knowledge required feels harder than any AWS Certification Exam I’ve ever sat.

But I get back to the module, and I think I’m done. Done enough. For today.

Finally, I publish the module, and immediately populate it with a bunch of issues for future improvements. We’re not going to get costs down to $0.00 a day by slacking!

—

Pete Wilcock is a 9x AWS Certified DevOps Consultant, AWS Community Builder, and Technical Writer for TechToSpeech. If I’m not possibly losing my mind and definitely my social life buried in some project, you can find me on LinkedIn, Twitter, GitHub, or my personal site.